The whispers of artificial intelligence are no longer confined to the hushed halls of innovation labs; they echo through every facet of our lives, promising transformations that once belonged solely to the realm of science fiction. From self-driving cars navigating complex urban landscapes to sophisticated algorithms composing symphonies, the future of AI is a tapestry woven with threads of unprecedented possibility. We stand at the precipice of a technological revolution that promises not just to change what we do, but how we live, work, and even understand ourselves.

Across industries, the impact is undeniable.

Yet, amidst this dazzling array of advancements, a fundamental question emerges: as AI becomes more pervasive, how do we ensure it remains human-centered?

Ultimately, how do we harness its immense power to amplify our best qualities, rather than diminish them?

The best way to determine the future of AI in mental health is to learn from other industries and apply their established insights.

As AI continues its inexorable march forward, a recurring theme is the evolution, rather than outright elimination, of human employment. While repetitive, data-intensive tasks are increasingly automated, the demand for uniquely human skills, including creativity, critical thinking, emotional intelligence, and complex problem-solving, is simultaneously on the rise.

In industries like law, AI is not replacing lawyers but empowering them to analyze case histories, predict outcomes, and draft documents with unprecedented speed.

In journalism, AI can synthesize vast amounts of information for reports, freeing human journalists to focus on investigative storytelling and nuanced analysis.

Even in creative fields, AI tools are becoming collaborators, assisting artists in generating new ideas, musicians in composing melodies, and designers in visualizing concepts.

Customer service, often a point of frustration, is being enhanced by AI chatbots that can resolve common issues instantly, reserving human agents for complex interactions that call for truly empathetic and specialized care.

This evolution extends to how entire industries function. Supply chains, once vulnerable to unpredictable disruptions, are becoming resilient through AI-driven predictive analytics. The transformation is not merely about efficiency; it's about reshaping the fundamental value proposition of human-led work, allowing individuals to ascend to higher-order tasks that require cognitive flexibility and emotional depth. This broader shift sets the stage for how mental healthcare, too, will redefine its roles and processes in an AI-infused world.

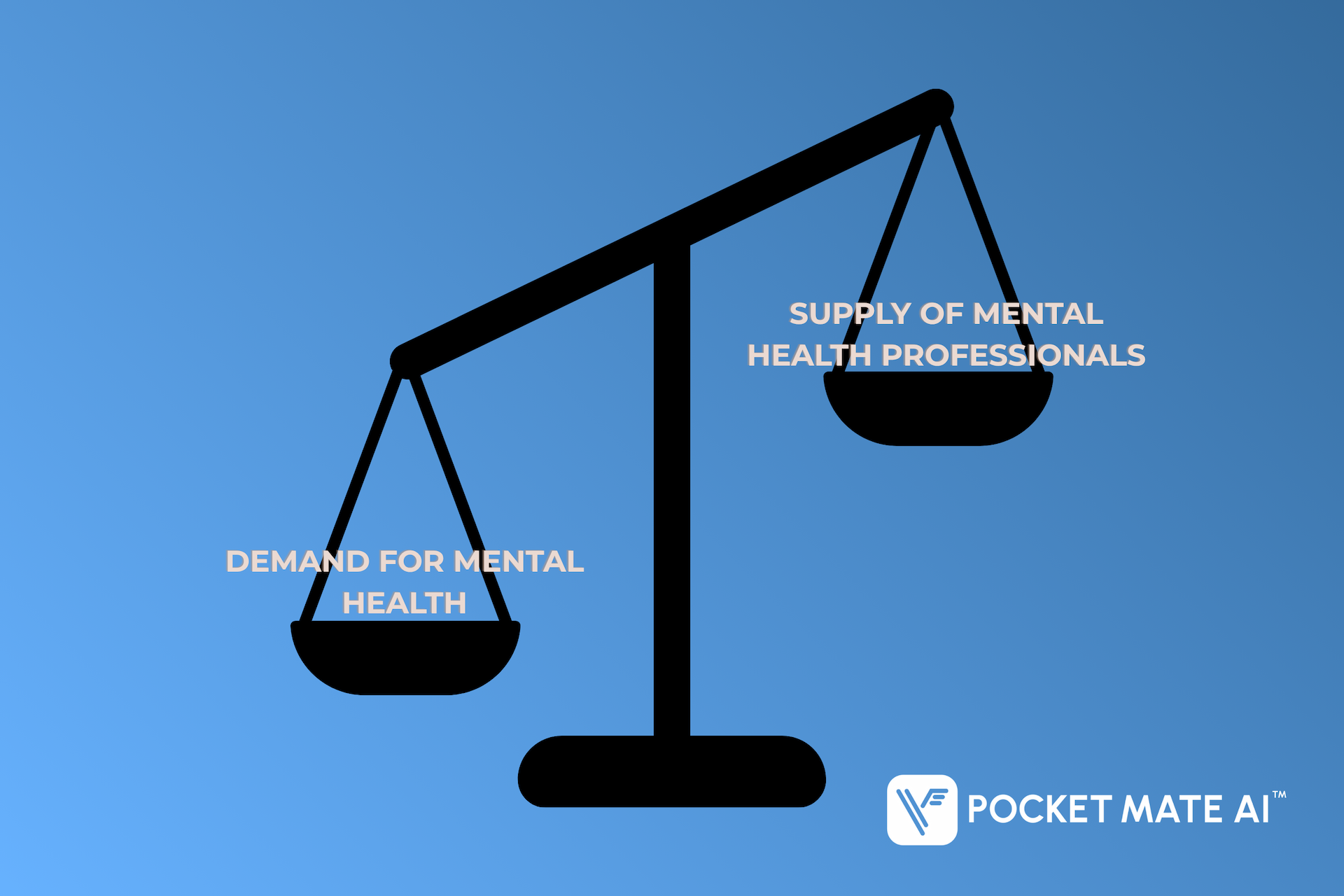

The mental health sector is ripe for disruption, not because human care is lacking, but because demand vastly outstrips supply. Global statistics paint a stark picture: millions suffer from mental health conditions, yet access to qualified professionals remains a significant barrier due to cost, geographical limitations, and pervasive stigma.

This is where the future of AI offers a transformative vision, shifting the industry from a reactive, often inaccessible model to a more proactive, democratized, and efficient one.

The administrative workload is a leading cause of burnout for human clinicians, diverting their valuable time away from patient interaction. AI offers a powerful solution by managing the rote tasks that consume hours each week:

The initial impact of AI is to shift the industry from a reactive approach (treating crises) to a proactive one (fostering continuous wellness).

For millions, the decision to seek care is blocked by financial cost, perceived stigma, or long waitlists. AI addresses this by ensuring support is always within reach:

These digital companions ensure that users are never alone in their journey. The tools provide a consistent, supportive environment for practicing essential skills:

The promise of AI in mental health is vast, but it is not without its perils. A robust discussion of the future of AI demands a frank acknowledgement of these risks, alongside strategies to mitigate them.

The most pressing concern in mental health AI is the sensitive nature of personal data. Users share their deepest thoughts and vulnerabilities.

AI models, if not properly trained and constrained, can generate incorrect, irrelevant, or even harmful information, a phenomenon known as "hallucination."

AI, by its very nature, lacks consciousness, lived experience, and the capacity for genuine human empathy.

While AI aims to democratize access, a digital divide still exists: segments of the population lack access to reliable internet or smart devices.

The path to a brighter future of AI in mental health is paved by companies committed to ethical innovation, transparent practices, and user well-being above all else. This requires more than just good intentions; it demands concrete actions and robust policy frameworks.

Industry leaders are distinguished by their unwavering commitment to:

AI Listener stands as a testament to this human-centered vision of the future of AI. We are not just building technology; we are building bridges to better well-being. Our approach embodies the principles of amplification and democratization:

| Amplifying Human Capacity | Democratizing Access |
|---|---|
| AI Listener handles the foundational tasks of mood monitoring, structured self-reflection, and initial support, providing therapists with invaluable insights into a client's daily emotional landscape between sessions. This allows clinicians to focus their precious time on the deep, nuanced therapeutic work that only a human can perform. We provide the data, they provide the soul. | By offering a 24/7, accessible, and non-judgmental platform, AI Listener removes critical barriers to care. It’s a low-threshold entry point for individuals grappling with mild to moderate stress, anxiety, or low mood, ensuring that support is always just a tap away, regardless of location or financial constraint. |

Our commitment to high-level data policies is unwavering. AI Listener implements stringent HIPAA-compliant protocols, robust encryption, and anonymization techniques to safeguard every user's personal information. We believe that true innovation in mental health AI must be built on a foundation of absolute trust and ethical responsibility.

The future of AI is here, and its potential to revolutionize mental health is immense. By embracing AI not as a replacement for human connection, but as a powerful amplifier and democratizer of access, we can build a more compassionate, efficient, and equitable mental wellness ecosystem.

From helping therapists reach more people to providing immediate, non-judgmental support to those in need, AI is proving to be a valuable ally in our collective journey toward better mental well-being. Companies like Pocket Mate are leading the charge, demonstrating that with responsible development, transparent practices, and a steadfast commitment to human-centered design, the future of AI can indeed be a brighter one for mental health. Explore how AI Listener is shaping this future at www.pocketmate.ai and discover how AI can enhance your mental health journey.

**NOTE:** AI Listener is not a crisis center. If you need immediate support, please contact the National Suicide Crisis Prevention Hotline (call 988), the National Suicide Prevention Lifeline (800-273-8255), or the Crisis Text Line (741741).

Copyright © 2025 Pocket Mate AI TM